Cohen's f² is often not readily accessible from commonly used software for repeated-measures or hierarchical data analysis. In this guide, we illustrate how to extract Cohen's f² for two variables within a mixed-effects regression model using PROC MIXED in SAS® software.
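As a rough illustration of the quantity involved, the sketch below applies the standard definition of local Cohen's f², which compares the variance explained by a model with and without the predictor of interest: f² = (R²_full − R²_reduced) / (1 − R²_full). The function name `cohens_f2_local` and the R² values are hypothetical and chosen only for illustration. How to obtain suitable R² values from a mixed-effects model (for example, from PROC MIXED output) is not shown here.

```python
def cohens_f2_local(r2_full, r2_reduced):
    """Local Cohen's f-squared for the predictor left out of the reduced model.

    r2_full:    proportion of variance explained with the predictor included.
    r2_reduced: proportion of variance explained with the predictor removed.
    """
    if r2_full >= 1:
        raise ValueError("r2_full must be less than 1")
    return (r2_full - r2_reduced) / (1 - r2_full)


# Hypothetical R-squared values, not taken from any real analysis.
f2 = cohens_f2_local(r2_full=0.30, r2_reduced=0.25)
print(round(f2, 4))
```

Because the denominator uses the unexplained variance of the full model, the same gain in R² yields a larger f² when the full model already explains most of the variance.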

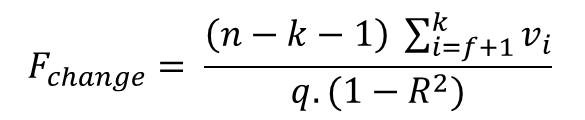

Reporting effect sizes in scientific articles is increasingly widespread and encouraged by journals; however, choosing an effect size for analyses such as mixed-effects regression modeling and hierarchical linear modeling can be difficult. One relatively uncommon, but very informative, standardized measure of effect size is Cohen's f², which allows an evaluation of local effect size, i.e., one variable's effect size within the context of a multivariate regression model.

Previously, I've written about how to interpret regression coefficients and their individual P values. I've also written about how to interpret R-squared to assess the strength of the relationship between your model and the response variable. Recently I've been asked how the F-test of overall significance and its P value fit in with these other statistics. That's the topic of this post!

In general, an F-test in regression compares the fits of different linear models. Unlike t-tests, which can assess only one regression coefficient at a time, the F-test can assess multiple coefficients simultaneously. The F-test of overall significance is a specific form of the F-test. It compares a model with no predictors to the model that you specify. A regression model that contains no predictors is also known as an intercept-only model.

The hypotheses for the F-test of overall significance are as follows:

Null hypothesis: The fit of the intercept-only model and your model are equal.

Alternative hypothesis: The fit of the intercept-only model is significantly reduced compared to your model.

In Minitab statistical software, you'll find the F-test for overall significance in the Analysis of Variance table.

If the P value for the F-test of overall significance is less than your significance level, you can reject the null hypothesis and conclude that your model provides a better fit than the intercept-only model. Great! That set of terms you included in your model improved the fit!

Typically, if you don't have any significant P values for the individual coefficients in your model, the overall F-test won't be significant either. However, in a few cases, the tests could yield different results. For example, a significant overall F-test could determine that the coefficients are jointly not all equal to zero, while the tests for individual coefficients fail to show that any single coefficient differs from zero.

There are a couple of additional conclusions you can draw from a significant overall F-test. In the intercept-only model, all of the fitted values equal the mean of the response variable. Therefore, if the P value of the overall F-test is significant, your regression model predicts the response variable better than the mean of the response.

While R-squared provides an estimate of the strength of the relationship between your model and the response variable, it does not provide a formal hypothesis test for this relationship. The overall F-test determines whether this relationship is statistically significant. If the P value for the overall F-test is less than your significance level, you can conclude that the R-squared value is significantly different from zero.

To see how the F-test works using concepts and graphs, see my post about understanding the F-test.
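To make the comparison between the intercept-only model and a specified model concrete, here is a minimal sketch in plain Python for the simplest case of a single predictor. The function name `overall_f_statistic` and the data are made up for illustration. The sketch computes only the F statistic and R-squared; turning F into a P value requires the F distribution with 1 and n − 2 degrees of freedom, which is left out here.

```python
def overall_f_statistic(x, y):
    """Overall F statistic for the simple linear regression y = b0 + b1 * x.

    Compares the intercept-only model (every fitted value is the mean of y)
    with the one-predictor model via their residual sums of squares.
    """
    n = len(y)
    k = 1  # number of predictors in the specified model
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Ordinary least-squares estimates for one predictor.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    # Residual sum of squares for each model.
    sse_intercept_only = sum((yi - mean_y) ** 2 for yi in y)
    sse_full = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))

    r_squared = 1 - sse_full / sse_intercept_only
    f_stat = ((sse_intercept_only - sse_full) / k) / (sse_full / (n - k - 1))
    return f_stat, r_squared


# Made-up illustrative data, not taken from any study.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
f_stat, r_squared = overall_f_statistic(x, y)
print(f"F = {f_stat:.3f}, R-squared = {r_squared:.3f}")
```

The same F value can be recovered from R-squared alone as (R² / k) / ((1 − R²) / (n − k − 1)), which is why a significant overall F-test is equivalent to concluding that R-squared differs from zero.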
